You are about to spend money on AI. The question is how much — and whether the difference is real.

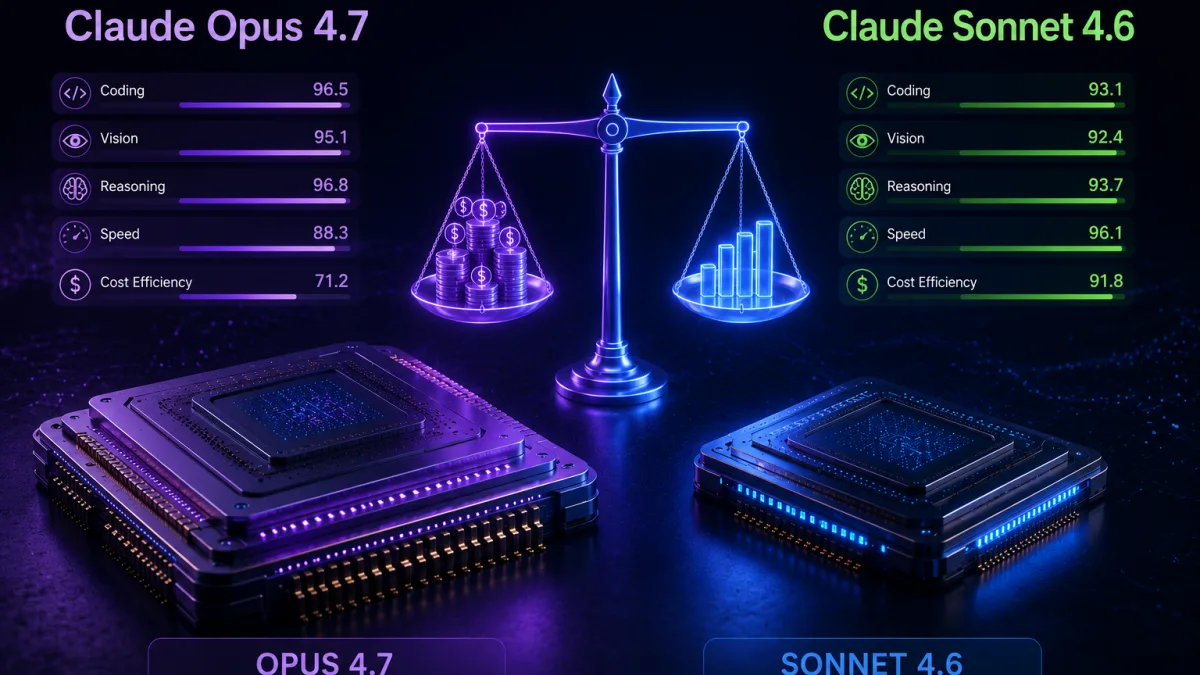

Anthropic has two flagship models available right now. Claude Opus 4.7 launched on April 16, 2026 as the most capable AI model the company has ever released to the public. Claude Sonnet 4.6 launched two months earlier and immediately redefined what a mid-tier AI could do.

The price gap between them is 40%. The performance gap on most tasks is under 8%. Here is exactly when that gap matters — and when it does not.

💡 Did you know? In developer testing, Claude Sonnet 4.6 was preferred over the previous flagship Opus 4.5 model 59% of the time — meaning a mid-tier model beat the prior generation's best. That result alone tells you how dramatically AI value equations have shifted in 2026.

What You Are Actually Comparing

Before the benchmarks, understand what each model is designed for.

Sonnet 4.6 and Opus 4.7 are not really competing for the same use case. Sonnet 4.6 is the answer to "what should I run in production by default?" Opus 4.7 is the answer to "what do I reach for when the task is too hard for anything else?" Waqar Azeem

Claude Sonnet 4.6 launched February 17, 2026. It was the first Sonnet model to surpass the previous generation's Opus in coding — a milestone that permanently changed how developers think about which model tier to use. It costs $3 per million input tokens and $15 per million output tokens.

Claude Opus 4.7 launched April 16, 2026. It is Anthropic's most capable generally available model, with a step-change improvement in agentic coding over Claude Opus 4.6. It costs $5 per million input tokens and $25 per million output tokens. Miraflow

The 40% price difference on paper becomes more complex in practice — because Opus 4.7 ships with a new tokenizer that produces up to 35% more tokens for the same text. Your real bill can be higher than the rate card suggests.

The Benchmark Numbers — What They Actually Mean

Here is how both models perform on the benchmarks that matter most:

Coding performance — SWE-bench Verified SWE-bench Verified jumps from 80.8% to 87.6% — a nearly 7-point gain that puts Opus 4.7 ahead of Gemini 3.1 Pro at 80.6%. Sonnet 4.6 scores 79.6% — within 8 points of Opus 4.7 and ahead of most competitor flagship models. Medium

For practical daily coding work — writing functions, fixing bugs, implementing features — that 8-point gap is nearly invisible. Both models handle standard coding tasks at a quality level that most developers cannot distinguish in practice.

Complex agentic coding — SWE-bench Pro This is where the gap opens up. On SWE-bench Pro, the harder multi-language variant, Opus 4.7 goes from 53.4% to 64.3%, leapfrogging both GPT-5.4 at 57.7% and Gemini at 54.2%. Sonnet 4.6 sits around 53% on this harder benchmark. For multi-file autonomous agents, Opus 4.7 is genuinely better. Medium

Vision and image processing Opus 4.7 has better vision for high-resolution images: it can accept images up to 2,576 pixels on the long edge, more than three times as many pixels as prior Claude models. Sonnet 4.6 handles standard image tasks well but cannot match Opus 4.7 on dense screenshots, complex diagrams, and technical visual work. Waqar Azeem

Computer use and GUI automation Both models score nearly identically on OSWorld-Verified — Sonnet 4.6 at 72.5%, Opus 4.7 at 78.0%. Since output is virtually the same, Sonnet is the obvious choice on cost alone for standard computer use tasks. CompanionLink

💡 Did you know? On CursorBench — which tests real coding inside an IDE environment — Opus 4.7 scores 70% versus Opus 4.6 at 58%. Early access partner Cursor confirmed four tasks that neither Opus 4.6 nor Sonnet 4.6 could solve at all were resolved by Opus 4.7. These are the tasks that justify the premium.

The Real Pricing Math

The rate card says Opus 4.7 is 40% more expensive than Sonnet 4.6. The reality is more complicated.

Opus 4.7 ships with a new tokenizer that can produce up to 35% more tokens for identical input text. This means a request that cost $0.10 on Opus 4.6 could cost $0.10 to $0.135 on Opus 4.7 — even though the rate per token did not change.

Here is what the same workload actually costs across both models:

| Workload | Sonnet 4.6 | Opus 4.7 | Real difference |

|---|---|---|---|

| 1M input + 200K output per day | $6/day | $10/day | +67% |

| Monthly at that rate | $180/month | $300/month | +$120/month |

| Heavy agent (10M input + 2M output) | $1,800/month | $3,000/month | +$1,200/month |

| With 90% prompt caching | $18/month | $30/month | Both affordable |

| 1,000 daily requests (10K tokens each) | $180/day | $300/day | Sonnet saves $43,800/year |

The prompt caching row is the most important. For workloads with stable system prompts and repeated document context, caching reduces costs by up to 90% on both models — making the absolute price difference much smaller in practice.

When Opus 4.7 Is Worth Every Penny

There are specific workloads where Opus 4.7 earns its premium — and the gap between the models is large enough to matter.

Autonomous coding agents Task budgets, improved file-system memory, and 128K output tokens make Opus 4.7 the best Claude model for agentic workflows. If you are building agents that autonomously write, test, and fix code across multiple files without human intervention, Opus 4.7's 87.6% SWE-bench score versus Sonnet's 79.6% translates to meaningfully fewer failed runs and less retry overhead. Getalai

High-resolution vision work Reading dense screenshots, extracting data from complex diagrams, processing multi-page technical documents with charts — the resolution jump in Opus 4.7 is a meaningful unlock rather than a spec sheet detail. One early-access partner saw visual acuity jump from 54.5% to 98.5% for autonomous penetration testing. Waqar Azeem

Expert-level professional reasoning Opus 4.7 is state-of-the-art on GDPval-AA, a third-party evaluation of economically valuable knowledge work across finance, legal, and other domains. For legal review, financial modeling, and scientific research where accuracy on professional documents is the primary requirement, Opus 4.7 operates at a different level. Waqar Azeem

When failure costs more than tokens A single agent call that fails on Sonnet 4.6 and requires retry logic, fallback handling, or manual intervention can cost 3–5x more than routing that task to Opus 4.7 upfront. One real-world example: failure and retry costs exceeded $10K per month on Sonnet-only deployments, reduced to $1K per month with smart Opus routing. True savings: $9,000 per month.

💡 Did you know? Opus 4.7 is also 15-20% faster on average than Opus 4.6 despite being more capable — making the speed-versus-quality tradeoff between Opus 4.7 and Sonnet 4.6 much smaller than it was in the previous generation.

When Sonnet 4.6 Is the Right Answer

Claude Sonnet 4.6 is the best Claude model for most users as of April 2026. It scores 79.6% on SWE-bench Verified — within 1.2 points of Opus 4.6 — while costing 40% less and running 17% faster. Monu

For the majority of production workloads, Sonnet 4.6 delivers results that are genuinely indistinguishable from Opus in practice:

Standard coding tasks — writing functions, fixing bugs, implementing features, writing tests. The 8-point benchmark gap does not show up meaningfully in daily work.

RAG and content generation — for retrieval-augmented generation, customer-facing chat, and content production, Sonnet 4.6 produces equivalent quality at 40% lower cost.

Computer use and GUI automation — at 72.5% versus Opus 4.7's 78.0%, the quality difference does not justify the price premium for most automation tasks.

High-volume production traffic — at enterprise scale, routing everything through Sonnet 4.6 instead of Opus saves $43,800 or more annually per 1,000 daily requests.

Blog writing, email drafting, analysis, summarisation — prose quality between the two models is essentially identical. There is no reason to pay Opus prices for content generation.

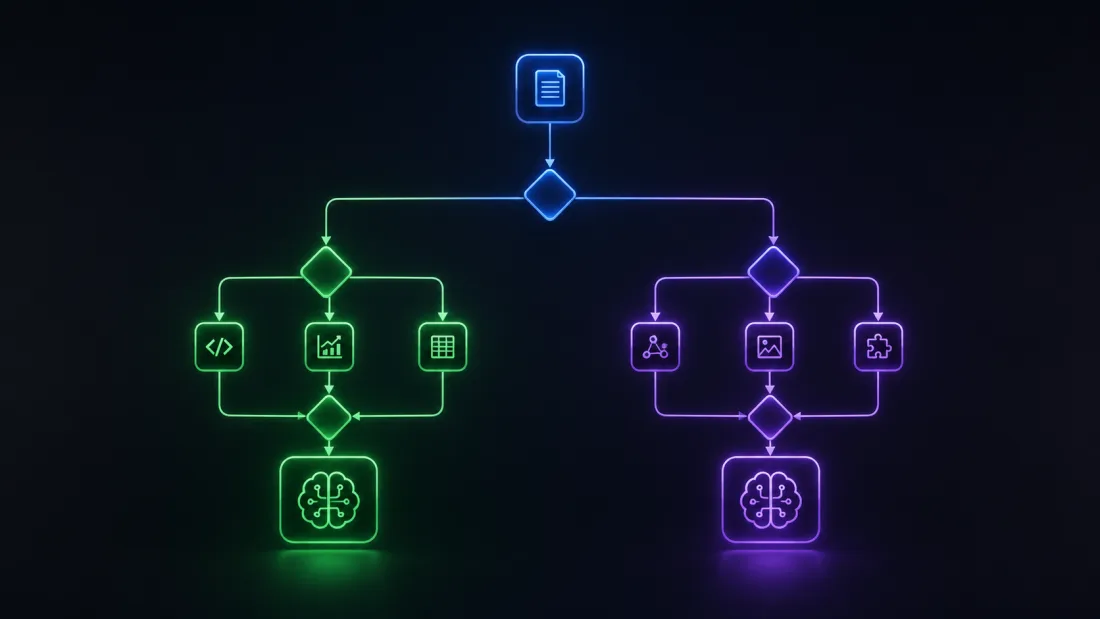

The Smart Strategy — Use Both

The optimal approach in 2026 is not choosing one model. It is routing each task to the right model. Getalai

The practical framework that works at scale:

| Task type | Model | Why |

|---|---|---|

| Bug fixes, feature implementation | Sonnet 4.6 | 98% of Opus quality, 40% cheaper |

| Computer use / GUI automation | Sonnet 4.6 | Scores within 6 pts at fraction of cost |

| Content generation, chat, RAG | Sonnet 4.6 | Identical quality in practice |

| Autonomous multi-file coding agents | Opus 4.7 | Meaningful quality gap justifies cost |

| High-resolution vision / screenshots | Opus 4.7 | 3x resolution advantage is real |

| Legal review, financial analysis | Opus 4.7 | Document reasoning 21% fewer errors |

| Hard problems that Sonnet fails | Opus 4.7 | Retry costs exceed Opus premium |

A 90/10 routing split (90% Sonnet, 10% Opus 4.7) reduces your Claude API bill by roughly 70% compared to running everything on Opus 4.6, while capturing most of Opus 4.7's quality gains where they matter most. Getalai

One More Thing — Claude Mythos

Both Opus 4.7 and Sonnet 4.6 exist below Anthropic's most powerful model: Claude Mythos Preview, released April 7, 2026 under Project Glasswing. Mythos scores 93.9% on SWE-bench — 6 points above Opus 4.7 — and represents the largest single capability jump Anthropic has ever shipped.

The catch: Mythos is not available to the public. Access is restricted to nine Project Glasswing partners — AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, Microsoft, and Nvidia — for defensive cybersecurity work only.

For everyone else in 2026, the choice is Opus 4.7 versus Sonnet 4.6 — and the framework above is how to make that decision correctly.

✅ TechPopDaily Verdict

Sonnet 4.6 is the right default for 80-90% of AI workloads in 2026. The benchmark gap with Opus 4.7 is real but narrow on most tasks — and the 40% price difference (plus the tokenizer inflation) makes Sonnet the obvious choice for anything that does not specifically require Opus-level performance. Use Opus 4.7 when you are building autonomous coding agents, processing high-resolution visual content, doing expert-level professional document work, or running tasks where a single failure costs more than the token premium. For everyone else — content creation, standard coding, chat, analysis, RAG — Sonnet 4.6 delivers 98% of the performance at a fraction of the cost. The smart move is not choosing one. It is routing intelligently between both.

Frequently Asked Questions

Is Claude Opus 4.7 worth the extra cost over Sonnet 4.6? For most users, no. Sonnet 4.6 delivers 79.6% on SWE-bench versus Opus 4.7's 87.6% — a meaningful gap only for the hardest coding tasks. For standard coding, content generation, and chat, Sonnet 4.6 is indistinguishable from Opus in practice and costs 40% less per token.

What is the actual price difference between Opus 4.7 and Sonnet 4.6? On the rate card: Opus 4.7 at $5/$25 per million tokens versus Sonnet 4.6 at $3/$15. In practice, Opus 4.7's new tokenizer produces up to 35% more tokens for identical text — meaning your real bill can be 67-80% higher than Sonnet for the same workload.

What is Opus 4.7 significantly better at? Opus 4.7 pulls decisively ahead on three areas: autonomous multi-file coding agents (87.6% vs 79.6% SWE-bench), high-resolution vision (3x pixel resolution advantage), and professional document reasoning (21% fewer errors on legal and financial documents). For tasks outside these areas, the quality gap narrows significantly.

Should I use Sonnet 4.6 or Opus 4.7 for coding? Default to Sonnet 4.6 for standard coding — bug fixes, feature implementation, tests, documentation. The 8-point SWE-bench gap does not show up in daily coding work. Switch to Opus 4.7 only for autonomous agents doing complex multi-file refactors or architecture-level decisions.

What is Claude Mythos and how does it compare? Claude Mythos Preview is Anthropic's most powerful model, released April 7, 2026 under Project Glasswing. It scores 93.9% on SWE-bench — 6 points above Opus 4.7. However it is not publicly available. Access is restricted to nine enterprise partners for defensive cybersecurity work only. Neither Opus 4.7 nor Sonnet 4.6 comes close to Mythos on the hardest benchmarks.